In this note I’d like to talk about one of the consistent sources of confusion for students learning calculus: Riemann sums. For many students, Riemann sums are those abstract irritations that crop up in the middle of proofs and can be safely ignored until they go away. (Of course, this is the issue with teaching integrals before sums.) In this article, I hope to give some additional context for Riemann sums, so that their role in mathematics (and the history of mathematics) can be made a bit clearer.

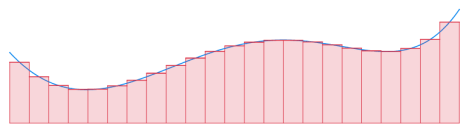

As a quick reminder, suppose that $f(x)$ is a continuous function on the interval $[0,1]$. (Or any finite interval $[a,b]$, but the case $[0,1]$ is the easiest to talk about.) To estimate the area under the curve $y = f(x)$, we approximate the region by a union of non-overlapping pieces whose areas are easy to compute. There are a few options for this (rectangles, triangles, trapezoids, sectors, etc.), but for simplicity one often uses rectangles of uniform width. For the curve in the diagram above, an approximation using 20 rectangles of width $1/20$ might look like:

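In general, an approximation to the (signed) area between $y = f(x)$ and the $x$-axis on the interval $[a,b]$ using $n$ rectangles of constant width $\Delta x = \frac{b-a}{n}$ would take the form

$$\sum_{k=1}^{n} f(x_k^*)\,\Delta x,$$

in which $x_k^* \in [x_{k-1}, x_k]$ is chosen so that $f(x_k^*)$ gives a “good” approximation to $f(x)$ on the interval $[x_{k-1}, x_k]$, where $x_k = a + k\,\Delta x$.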
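As a minimal sketch of the general formula, here is a Python function that evaluates $\sum f(x_k^*)\,\Delta x$ over uniform subintervals. The name `riemann_sum` and the three sample-point rules (`"left"`, `"right"`, `"mid"`) are illustrative choices, not part of any particular library.

```python
from typing import Callable


def riemann_sum(f: Callable[[float], float], a: float, b: float, n: int,
                rule: str = "right") -> float:
    """Approximate the signed area under f on [a, b] with n rectangles
    of uniform width dx = (b - a) / n.

    rule picks the sample point x_k^* in [x_{k-1}, x_k]:
    "left" -> x_{k-1}, "right" -> x_k, "mid" -> (x_{k-1} + x_k) / 2.
    """
    if n <= 0:
        raise ValueError("n must be a positive integer")
    offsets = {"left": 0.0, "right": 1.0, "mid": 0.5}
    if rule not in offsets:
        raise ValueError(f"unknown rule: {rule!r}")
    dx = (b - a) / n
    t = offsets[rule]
    # x_k^* = a + (k - 1 + t) * dx for k = 1, ..., n
    return sum(f(a + (k - 1 + t) * dx) for k in range(1, n + 1)) * dx


if __name__ == "__main__":
    for rule in ("left", "mid", "right"):
        print(rule, riemann_sum(lambda x: x * x, 0.0, 1.0, 20, rule))
```

For an increasing function such as $x^2$ on $[0,1]$, the left and right rules bracket the true area, while the midpoint rule is usually much closer for the same $n$.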

Here, “good” depends on the application at hand. If you’re trying to show that the Riemann sum converges to the definite integral, you might care about upper and lower bounds, in which case $x_k^*$ would be chosen such that $f(x_k^*)$ is the maximum/minimum (resp.) of $f$ on the interval $[x_{k-1}, x_k]$.
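To make the upper/lower-bound idea concrete, here is a small Python sketch under one simplifying assumption: $f$ is increasing on $[a,b]$, so the minimum and maximum on each subinterval sit at its left and right endpoints. (For a general continuous $f$, locating those extrema is the hard part, and this shortcut does not apply.) Exact `Fraction` arithmetic keeps the gap between the two sums exact; the function name is hypothetical.

```python
from fractions import Fraction
from typing import Callable, Tuple


def lower_upper_sums(f: Callable[[Fraction], Fraction], a: Fraction, b: Fraction,
                     n: int) -> Tuple[Fraction, Fraction]:
    """Lower and upper Riemann sums for an INCREASING function f on [a, b].

    Assumption: f is increasing, so on [x_{k-1}, x_k] the minimum is
    f(x_{k-1}) and the maximum is f(x_k).
    """
    dx = (b - a) / n
    points = [a + k * dx for k in range(n + 1)]
    lower = sum((f(points[k - 1]) for k in range(1, n + 1)), Fraction(0)) * dx
    upper = sum((f(points[k]) for k in range(1, n + 1)), Fraction(0)) * dx
    return lower, upper


if __name__ == "__main__":
    lo, hi = lower_upper_sums(lambda x: x * x, Fraction(0), Fraction(1), 100)
    # The gap telescopes to (f(b) - f(a)) * dx, which is 1/100 here.
    print(lo, hi, hi - lo)
```

Because the gap telescopes to $(f(b) - f(a))\,\Delta x$, it shrinks to zero as $n \to \infty$, which is exactly what squeezes both sums onto the integral in this monotone case.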

In other cases, “good” might mean choosing $x_k^*$ such that some mean value property holds. (This is a typical step when proving the fundamental theorem of calculus or when deriving the differentials associated with other coordinate systems, such as polar coordinates.)

— RIEMANN SUMS IN PROTO-CALCULUS —

The most honest application of Riemann sums is undoubtedly numerical integration. This is a topic that will show up in any course in numerical analysis, but it’s often rushed in calculus classes that focus on closed-form integration instead.

And yet, when a closed-form antiderivative $F(x)$ is known for $f(x)$, the (second) fundamental theorem of calculus gives

$$\int_a^b f(x)\,dx = F(b) - F(a),$$

which means that Riemann sums are now the “wrong” way to study simple definite integrals. (Riemann sums are a good way to motivate the integral-as-area analogy, however.)

Stepping into our time machine, we can forget the fundamental theorem of calculus and go back to a simpler time when Riemann sums were used to compute definite integrals. (In these examples I’ll skip some of the details that justify convergence to the stated result.)

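Example 1: Find the area under the curve $y = x^2$ over $[0,1]$.

Solution: The Riemann sum for an $n$-term approximation to this integral using rectangles of width $\frac{1}{n}$ is

$$\sum_{k=1}^{n} (x_k^*)^2 \cdot \frac{1}{n}.$$

Since it does not matter which $x_k^*$ we choose, we take a right endpoint approximation on each subinterval $\left[\frac{k-1}{n}, \frac{k}{n}\right]$. In other words, we choose $x_k^* = \frac{k}{n}$. The limit of our Riemann sums is then

$$\lim_{n\to\infty} \sum_{k=1}^{n} \frac{k^2}{n^3} = \lim_{n\to\infty} \frac{n(n+1)(2n+1)}{6n^3},$$

in which we’ve used the closed-form expression for the sum of the first $n$ squares to evaluate the sum. The limit (and our answer for the area under the curve) is $\frac{1}{3}$.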

A similar calculation (omitting limits and other justification) was performed as early as 1629 by the Italian mathematician Bonaventura Cavalieri (1598-1647). In particular, this result predated the fundamental theorem of calculus (in Newton’s form, say) by several decades.
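A quick exact check of the algebra in Example 1, with the curve $y = x^2$ on $[0,1]$: the right endpoint sum equals $\frac{n(n+1)(2n+1)}{6n^3} = \frac{1}{3} + \frac{1}{2n} + \frac{1}{6n^2}$, so its excess over $\frac{1}{3}$ shrinks roughly like $\frac{1}{2n}$. The helper names below are illustrative.

```python
from fractions import Fraction


def right_sum_squares(n: int) -> Fraction:
    """Right endpoint Riemann sum for y = x^2 on [0, 1] with n rectangles,
    i.e. sum_{k=1}^n (k/n)^2 * (1/n), computed exactly."""
    return sum((Fraction(k, n) ** 2 * Fraction(1, n) for k in range(1, n + 1)),
               Fraction(0))


def closed_form(n: int) -> Fraction:
    """n(n+1)(2n+1) / (6 n^3), from the formula for the sum of the first n squares."""
    return Fraction(n * (n + 1) * (2 * n + 1), 6 * n ** 3)


if __name__ == "__main__":
    for n in (10, 100, 1000):
        s = right_sum_squares(n)
        assert s == closed_form(n)
        # Exact excess over 1/3, and the sum as a float
        print(n, s - Fraction(1, 3), float(s))
```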

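Example 2: Compute the area under the curve $y = e^x$ over $[0,1]$.

Solution: Here, a right endpoint approximation and the geometric series give

$$\sum_{k=1}^{n} e^{k/n} \cdot \frac{1}{n} = \frac{1}{n}\cdot\frac{e^{1/n}(e - 1)}{e^{1/n} - 1} = \frac{h e^{h}}{e^{h} - 1}\,(e - 1),$$

in which we’ve set $h = \frac{1}{n}$. The limit is then

$$\lim_{h\to 0^+} \frac{h e^{h}}{e^{h} - 1}\,(e - 1) = \lim_{h\to 0^+} \frac{e^{h} + h e^{h}}{e^{h}}\,(e - 1) = e - 1$$

by l’Hôpital’s rule.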
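The geometric-series step in Example 2 can be checked numerically. This sketch takes the curve to be $y = e^x$ on $[0,1]$ and compares the direct right endpoint sum with the closed form $\frac{h e^h}{e^h - 1}(e-1)$, $h = 1/n$; the function names are illustrative. It uses `math.expm1` so that $e^h - 1$ stays accurate when $h$ is tiny.

```python
import math


def right_sum_exp(n: int) -> float:
    """Right endpoint Riemann sum for y = e^x on [0, 1]: sum_{k=1}^n e^{k/n} / n."""
    return sum(math.exp(k / n) for k in range(1, n + 1)) / n


def geometric_closed_form(n: int) -> float:
    """The same sum via the geometric series, written with h = 1/n:
    h * e^h * (e - 1) / (e^h - 1)."""
    h = 1.0 / n
    return h * math.exp(h) * (math.e - 1.0) / math.expm1(h)


if __name__ == "__main__":
    for n in (10, 100, 1000, 10**6):
        approx = geometric_closed_form(n)
        # Distance from the exact area e - 1
        print(n, approx, approx - (math.e - 1.0))
```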

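Example 3: Compute the area under the curve $y = \frac{1}{x^2}$ for $x \geq 1$.

Solution: Rather than approach the entire range at once, we fix $b > 1$ and consider $y = \frac{1}{x^2}$ over the range $[1, b]$. Furthermore, since left/right endpoint approximations using rectangles of uniform width give intractable sums, we are forced to embrace rectangles of variable width.

Choosing an $n$-term subdivision with intervals of the form $[r^{k-1}, r^{k}]$, where $r = b^{1/n}$, gives the left endpoint approximation

$$\sum_{k=1}^{n} \frac{r^{k} - r^{k-1}}{r^{2(k-1)}} = \sum_{k=1}^{n} r^{2-k} - \sum_{k=1}^{n} r^{1-k},$$

which is a simple difference of geometric series! Summing and simplifying gives $r\left(1 - r^{-n}\right) = b^{1/n}\left(1 - \frac{1}{b}\right)$, and hence the explicit form

$$\int_1^b \frac{dx}{x^2} = \lim_{n\to\infty} b^{1/n}\left(1 - \frac{1}{b}\right) = 1 - \frac{1}{b}.$$

Taking $b \to \infty$ gives the full range $[1, \infty)$ and the final answer $1$.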
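Here is a numerical sketch of the geometric partition, taking the curve in Example 3 to be $y = 1/x^2$ on $[1,b]$. It adds up the left endpoint rectangles over $1 = r^0 < r^1 < \dots < r^n = b$ one at a time and compares the result with $b^{1/n}\left(1 - \frac{1}{b}\right)$. The function names are illustrative.

```python
def geometric_left_sum(b: float, n: int) -> float:
    """Left endpoint sum for y = 1/x^2 on [1, b] over the geometric partition
    1 = r^0 < r^1 < ... < r^n = b, where r = b**(1/n)."""
    r = b ** (1.0 / n)
    total = 0.0
    for k in range(1, n + 1):
        left, right = r ** (k - 1), r ** k
        # f(left) * (width of [r^(k-1), r^k]), with f(x) = 1/x^2
        total += (right - left) / left ** 2
    return total


def explicit_form(b: float, n: int) -> float:
    """Closed form of the same sum: b^(1/n) * (1 - 1/b)."""
    return b ** (1.0 / n) * (1.0 - 1.0 / b)


if __name__ == "__main__":
    b = 10.0
    for n in (5, 50, 500):
        print(n, geometric_left_sum(b, n), explicit_form(b, n), 1.0 - 1.0 / b)
```

Because $1/x^2$ is decreasing, each left endpoint rectangle overestimates, which is why the sums approach $1 - \frac{1}{b}$ from above.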

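Similar techniques can be used to compute areas under curves of the form $y = x^{p}$ for arbitrary $p$ (as was done with minimal rigor by John Wallis), but some care needs to be taken in the case $p = -1$. In that case every rectangle in the left endpoint approximation has the same area $b^{1/n} - 1$, and our result simplifies to

$$\int_1^b \frac{dx}{x} = \lim_{n\to\infty} \sum_{k=1}^{n} \frac{b^{k/n} - b^{(k-1)/n}}{b^{(k-1)/n}} = \lim_{n\to\infty} n\left(b^{1/n} - 1\right).$$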

This last case was “proven” by Grégoire de Saint-Vincent (1647) in the sense that Saint-Vincent discovered and proved some basic properties of what we now call the logarithm (originally defined as an area). This result was put on more solid analytic footing by Nicholas Mercator in 1668. (Not to be confused with the cartographer Gerardus Mercator.)
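The $p = -1$ case can be watched converging to the logarithm. In this sketch (function names illustrative), the left endpoint sum for $y = 1/x$ over the geometric partition of $[1,b]$ is added up rectangle by rectangle, compared with $n\left(b^{1/n} - 1\right)$, and then compared with Python's `math.log`, which serves only as an outside reference value.

```python
import math


def left_sum_reciprocal(b: float, n: int) -> float:
    """Left endpoint sum for y = 1/x on [1, b] over the geometric partition
    r^0 < r^1 < ... < r^n = b with r = b**(1/n), added rectangle by rectangle."""
    r = b ** (1.0 / n)
    return sum((r ** k - r ** (k - 1)) / r ** (k - 1) for k in range(1, n + 1))


def closed_form(b: float, n: int) -> float:
    """Each rectangle above has area r - 1, so the sum collapses to n * (b^(1/n) - 1)."""
    return n * (b ** (1.0 / n) - 1.0)


if __name__ == "__main__":
    for n in (10, 1000, 100000):
        # math.log is used only as an external reference value
        print(n, closed_form(2.0, n), math.log(2.0))
```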

Note — There is some general anachronism in these examples in that logarithms and exponentials were not well understood until after the first proofs of the fundamental theorem of calculus. Moreover, l’Hôpital’s rule (due to Johann Bernoulli in 1694) would not be published until 1696.

— FROM RIEMANN SUMS TO INTEGRALS —

While Riemann sums do a good job at motivating integrals, they do a terrible job at computing with them. (This is why we should be happy that the fundamental theorem of calculus exists.)

Once we realize this, we recognize that the utility of Riemann sums lies not in converting integrals to sums, but in converting sums to integrals. Perhaps this is why Riemann sums show up sparingly enough to be forgotten by students each time — they’ll be used to introduce the definite integral, the arc length formula, and little else.

It’s really only after calculus that Riemann sums creep back into mathematics, as a way to simplify sum calculations using integrals. For an example that’s appeared on this very blog, consider the following:

Theorem: The set of positive integers $n$ with a prime factor greater than $\sqrt{n}$ has natural density $\log 2$.

Proof (sketch): The proof is given in full on my post concerning admissible orders for simple groups. By a simple counting argument and the prime number theorem, we reduce our problem to that of estimating

We divide the range into the

subintervals

and apply upper and lower bounds over these shorter intervals to show that

In the limit as , we evaluate these sums as left and right endpoint Riemann sums for the same integral. For example, the lower bound (and left endpoint approximation) gives

as desired.
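The theorem itself is easy to probe numerically. The sketch below counts, by brute force, the integers $n \le N$ whose largest prime factor $P$ satisfies $P^2 > n$, and cross-checks that count against $\sum_{p \le N} \min\left(p - 1, \lfloor N/p \rfloor\right)$, which comes from writing such an $n$ as $pm$ with $m < p$. This illustrates the statement only, not the Riemann-sum argument in the proof; convergence to $\log 2 \approx 0.693$ is slow, so expect only rough agreement for moderate $N$. All function names are illustrative.

```python
import math
from typing import List


def largest_prime_factors(limit: int) -> List[int]:
    """lpf[n] = largest prime factor of n for 2 <= n <= limit (lpf[0] = lpf[1] = 0).
    Primes are visited in increasing order, so the last prime written into
    lpf[m] is the largest prime dividing m."""
    lpf = [0] * (limit + 1)
    for p in range(2, limit + 1):
        if lpf[p] == 0:  # no smaller prime divides p, so p is prime
            for m in range(p, limit + 1, p):
                lpf[m] = p
    return lpf


def count_brute(limit: int) -> int:
    """Number of n in [1, limit] having a prime factor greater than sqrt(n)."""
    lpf = largest_prime_factors(limit)
    return sum(1 for n in range(2, limit + 1) if lpf[n] * lpf[n] > n)


def count_by_primes(limit: int) -> int:
    """Same count via n = p * m with m < p: sum over primes p of min(p - 1, limit // p)."""
    lpf = largest_prime_factors(limit)
    return sum(min(p - 1, limit // p) for p in range(2, limit + 1) if lpf[p] == p)


if __name__ == "__main__":
    N = 10**5
    c = count_brute(N)
    print(c, c / N, math.log(2))
```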

You’ll also see Riemann sums as a way to translate discrete inequalities into integral inequalities. For a cute example of this, I present the following:

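Example 4: Let $f$ be a positive continuous function on $[0,1]$. Prove that

$$\exp\left(\int_0^1 \log f(x)\,dx\right) \leq \int_0^1 f(x)\,dx.$$

Proof: Fix $n$, and define $x_k$ by $x_k = \frac{k}{n}$. A right endpoint approximation to the integral of $\log f(x)$ using rectangles of width $\frac{1}{n}$ gives

$$\exp\left(\int_0^1 \log f(x)\,dx\right) = \lim_{n\to\infty} \exp\left(\frac{1}{n}\sum_{k=1}^{n} \log f(x_k)\right) = \lim_{n\to\infty} \left(\prod_{k=1}^{n} f(x_k)\right)^{1/n}.$$

By the AM-GM inequality, we have

$$\left(\prod_{k=1}^{n} f(x_k)\right)^{1/n} \leq \frac{1}{n}\sum_{k=1}^{n} f(x_k).$$

Taking limits, we recognize the right-hand side as a Riemann sum using rectangles of width $\frac{1}{n}$:

$$\exp\left(\int_0^1 \log f(x)\,dx\right) \leq \lim_{n\to\infty} \frac{1}{n}\sum_{k=1}^{n} f(x_k) = \int_0^1 f(x)\,dx,$$

which completes the proof.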
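For a concrete feel, this sketch evaluates both sides of the discrete AM-GM step for the sample function $f(x) = 1 + x$ (an illustrative choice, not from the argument). The exponentiated right endpoint sum of $\log f$ is the geometric mean of $f(x_1), \dots, f(x_n)$, and it should approach $\exp(2\log 2 - 1) = 4/e \approx 1.4715$, while the plain right endpoint sum approaches $\int_0^1 (1 + x)\,dx = 3/2$.

```python
import math
from typing import Callable, Tuple


def gm_am_right_sums(f: Callable[[float], float], n: int) -> Tuple[float, float]:
    """For a positive f on [0, 1] with x_k = k/n, return
    (exp of the right endpoint sum for log f, right endpoint sum for f),
    i.e. (geometric mean, arithmetic mean) of f(x_1), ..., f(x_n)."""
    values = [f(k / n) for k in range(1, n + 1)]
    geometric = math.exp(sum(math.log(v) for v in values) / n)
    arithmetic = sum(values) / n
    return geometric, arithmetic


def sample_f(x: float) -> float:
    """Illustrative positive continuous function on [0, 1]."""
    return 1.0 + x


if __name__ == "__main__":
    for n in (10, 100, 10000):
        print(n, *gm_am_right_sums(sample_f, n))
    print(4 / math.e, 1.5)  # the two limits
```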

— CONCLUDING REMARKS —

I hope that this handful of examples has hinted at the enduring role of Riemann sums beyond the scope of a typical calculus course. For a few more examples and applications, check out the exercises!

— EXERCISES —

Exercise 1: Find the definite integral of over

using Riemann sums and the angle addition formula for

. (Or by using the complex exponential.)

Exercise 2: Compute the following limit:

Hint: This problem uses a non-uniform division of the interval . The term

might tell you what partition was used.

Exercise 3: Show that the statement of Example 4 does not hold when $[0,1]$ is replaced by a general interval $[a,b]$.

Riemann sums are a mainstay of math contests as well, often in the guise of integral estimations. For example, the 1996 Putnam Exam includes the following problem:

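Exercise 4 (Putnam B2, 1996): Show that for every positive integer $n$,

$$\left(\frac{2n-1}{e}\right)^{\frac{2n-1}{2}} < 1 \cdot 3 \cdot 5 \cdots (2n-1) < \left(\frac{2n+1}{e}\right)^{\frac{2n+1}{2}}.$$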

Hint: If you remember Stirling’s estimate for the factorial, it should come as little surprise that these bounds come from an integral estimate of the logarithm.
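Without giving away the integral estimate, the Putnam inequality can at least be sanity-checked for small cases by comparing logarithms, which avoids overflow for large $n$. This is a numerical check, not a proof; the function names are illustrative.

```python
import math
from typing import Tuple


def log_odd_product(n: int) -> float:
    """log(1 * 3 * 5 * ... * (2n - 1)), summed term by term."""
    return sum(math.log(2 * k - 1) for k in range(1, n + 1))


def log_bounds(n: int) -> Tuple[float, float]:
    """Logarithms of the lower and upper bounds in the Putnam inequality:
    ((2n-1)/e)^((2n-1)/2) and ((2n+1)/e)^((2n+1)/2)."""
    lower = (2 * n - 1) / 2 * (math.log(2 * n - 1) - 1)
    upper = (2 * n + 1) / 2 * (math.log(2 * n + 1) - 1)
    return lower, upper


if __name__ == "__main__":
    for n in (1, 2, 5, 10, 100):
        lo, hi = log_bounds(n)
        print(n, lo, log_odd_product(n), hi)
```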
