These days, Blaise de Vigenère is best remembered for the Vigenère cipher, a classical cipher which saw wide use between the sixteenth and nineteenth centuries. This is actually a misattribution, as the Vigenère cipher was invented by Giovan Battista Bellaso in 1553. Still, Vigenère was a capable cryptographer, who invented his own cipher, the autokey cipher, in 1568. The autokey cipher was never used as widely as the Vigenère cipher, perhaps because small encryption/decryption errors could render the whole message illegible.

The autokey and Vigenère ciphers differ only in their method for producing a keystream, a long string of characters (equal to the length of the plaintext) generated by a short key. In the Vigenère cipher, the keystream is formed by concatenating the key over and over again, as in lemonlemonlemon… In the autokey cipher, the keystream is formed from the plaintext itself, prepended by a short key. For example, to encrypt classicalcryptography with the key lemon in the autokey cipher, our keystream is lemonclassicalcryptog. In either case, the ciphertext is formed by “adding” corresponding letters in the plaintext and keystream, by converting a,b,…,z to 0,1,…,25 and performing addition mod 26:

The Vigenère cipher fell out of use in the second half of the nineteenth century, following successful cryptanalysis by Charles Babbage in 1854 and Friedrich Kasiski in 1863. In essence, one notes that the Vigenère cipher acts as a series of interlaced shift ciphers. If the key has length k, the ciphertext breaks into k parts, with each part transformed by a part-specific shift. Thus letter frequency analysis can determine the shifts and the corresponding key letters.

It is natural to ask whether or not frequency-based statistical analysis can be used in cryptanalysis of the autokey cipher as well. Certainly the methods which produce good results in the Vigenère cipher no longer apply, since the keystream is aperiodic. In any case, it appears that very little has been written on this topic. Wikipedia, for instance, lists statistical attacks on Vigenère but relies on cribbing to cryptanalyze the autokey cipher. The goal of this note is to show two ways in which statistical analysis can be used to analyze autokey ciphers: as a means to identify them “in the wild” and as a means to decrypt them and recover keys.

— 1. IDENTIFYING AUTOKEY CIPHERTEXT —

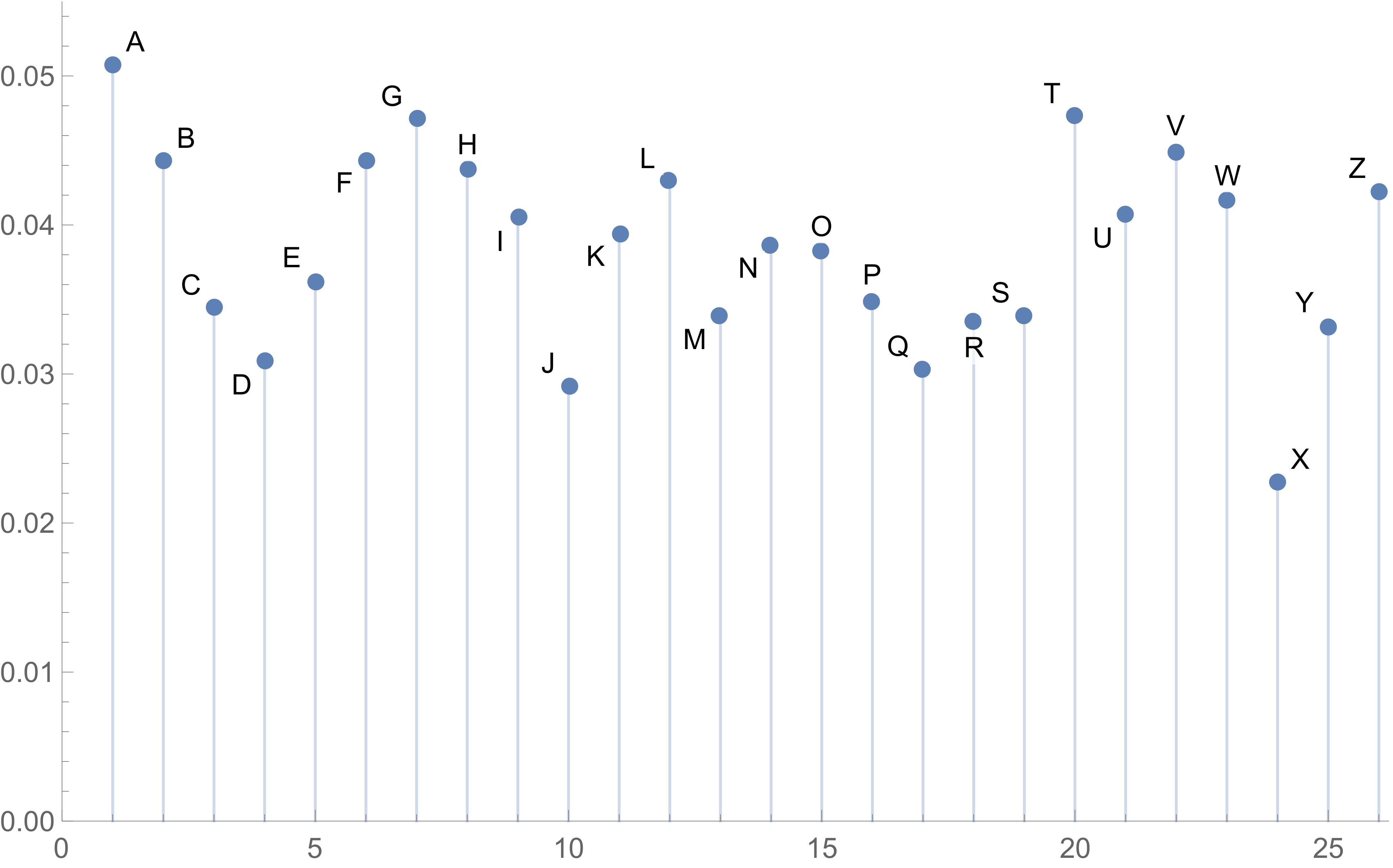

Our jumping-off point is the observation that the letter frequencies of English text are non-uniform. (This is the foundation of all frequency analysis.) Nevertheless, letters do follow a consistent distribution. In the autokey cipher, this implies that ciphertext follows the distribution of the sum (mod 26) of two English-distributed character sequences. The two streams are correlated, but this correlation grows quite weak once the key length exceeds a few letters, so we can ignore it in computations and assume that the streams behave as independent variables. Under this assumption, the following diagram shows the distribution of letter frequencies in an autokey ciphertext:

Just to be clear, this is a model for the letter frequencies of any sufficiently long and sufficiently standard autokey ciphertext. The distribution does not depend on the keyword, which is one of the ways in which the autokey cipher differs from the Vigenère cipher. More importantly, this letter distribution is fairly distinct, so a test could be used to test whether or not a given ciphertext is encrypted using the autokey cipher.

— 2. STATISTICAL CRYPTANALYSIS —

Now, given a ciphertext which is expected to have been encrypted using the autokey cipher, we discuss cryptanalysis. To motivate these ideas, consider the following example ciphertext:

If we expect that the autokey keyword is a common English word or phrase, we can apply a dictionary attack to save time over a brute-force keyspace attack. This requires an automated way to determine “English-ness” of the automated decryption, which we can accomplish using n-gram statistics. (I’ve found that trigrams are most useful for what follows.) A dictionary attack using 10,000 randomly generated keys of length between 3 and 10 drawn from a crossword dictionary identifies some keys as more probable than others; the top fifteen keys (as measured by trigram statistics) are listed below:

1. lysosome 6. bourgeon 11. wwii 2. beloveds 7. dreamgirls 12. lookpale 3. paragoge 8. regauged 13. altitude 4. runouton 9. brushpile 14. regorged 5. aquacade 10. damosels 15. dxii

The abundance of high-performing 8-letter words in our random sample suggests that the real keyword has length 8. The reason for this is not too hard to see: when our candidate keyword has the correct length k and at least one letter in the correct position, one out of every k letters in attempted decryption must be correct (and therefore English-like). Restricting to 8-letter keys produces a refined sample of near-keys. The fifteen highest-performing candidates are listed below:

1. hemostat 6. removals 11. lemonoil 2. lemaitre 7. lemonbar 12. letslide 3. lemonice 8. bemuzzle 13. lemurine 4. remoulds 9. prionace 14. remotely 5. demomode 10. remorses 15. demotist

The most common letters in each position form the string lemonide, which differs from lemonade in only one position. Decryption using the key lemonade recovers the plaintex:

In hindsight, we stress that our dictionary attack never found lemonade in our dictionary: the deduction was done merely by piecing together information from near-keys. In particular, we could reassemble the key by studying decryptions using randomized keys. Either way, high-performing keys should share letters with the actual key.

In our example, suppose that the key length is known to be 8. By testing random keys which begin with a and are otherwise random, we can determine a rough estimate of such keys. Replacing a with other characters allows us to rank the relative performance of different key starts. If we do this for each of the eight letters in our key, we produce the following table of rankings:

The leftmost column correctly identifies about half of the letters in the key, and the other letters can be derived from context or from partial decryption of the plaintext. We could also improve our results (at the cost of a lot of time) by looking at random keys with prescribed bigram pairs.

As with any statistical attack, our results improve quite a bit as the ciphertext increases in length. The example used here has 77 characters, which is probably on the low end of tractable given the key length. A good rule of thumb asks that the ciphertext be at least ten times as long as the key.