In 1946, S. Bochner published the paper Formal Lie Groups, in which he noted that several classical theorems (due to Sophus Lie) concerning infinitesimal transformations on Lie groups continue to hold when the (convergent) power series locally representing the group law is replaced by a suitable formal analogue. It was not long before this formalism found far-reaching uses in algebraic number theory and algebraic topology.

Unfortunately, few students see more than two or three explicit (i.e. closed form) group laws before stumbling into the deep end of abstract nonsense. In this article, we’ll see in a rigorous sense why this must be the case, providing along the way a complete classification of polynomial and rational formal group laws (over any reduced ring).

— PART I —

Following Bochner, a formal group law over a commutative ring $R$ (with unity) is a bivariate power series $F(X,Y) \in R[[X,Y]]$ such that the following two properties hold:

(1) $F(X,Y) = X + Y + O(X^2, XY, Y^2)$;

(2) $F(X, F(Y,Z)) = F(F(X,Y), Z)$,

in which we borrow the O-notation to denote an element of the ideal $(X^2, XY, Y^2)$. On occasion, we’ll stress the fact that $F$ is a formal group law by writing $X +_F Y := F(X,Y)$. Then (2) clearly implies that $+_F$ is an associative binary operation, while (1) states that $+_F$ acts locally (i.e. to first order) like “normal” addition on $R$.

The reader may note that (1-2) fall a few axioms short of the well-known group axioms. Actually, though, no further axioms are needed: the existence of an identity and of inverses is a consequence of (1-2). To be precise,

Proposition: Let $F$ be a formal group law over $R$. Then

- $F(X, 0) = X$ and $F(0, Y) = Y$;

- There exists $i(X) \in XR[[X]]$ such that $F(X, i(X)) = 0$.

Proof: An exercise in the (formal) Implicit Function Theorem.
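To make the Implicit Function Theorem argument concrete, here is a minimal sketch that solves $F(X, i(X)) = 0$ one coefficient at a time. It assumes $F$ is given as a finite dictionary `{(i, j): c}` standing for $\sum c\,X^iY^j$ (a polynomial, or a power series truncated at some degree); the helper names `compose_truncated` and `formal_inverse` are hypothetical, chosen for illustration.

```python
from fractions import Fraction


def compose_truncated(F, s, n):
    """Coefficients of F(X, s(X)) modulo X^(n+1).

    F is a dict {(i, j): c} standing for sum(c * X^i * Y^j); s is a list of
    n + 1 coefficients of a power series s(X) with s[0] == 0.
    """
    def mul(p, q):
        # Product of two truncated power series, discarding degrees > n.
        out = [Fraction(0)] * (n + 1)
        for i, a in enumerate(p):
            if a:
                for j, b in enumerate(q[: n + 1 - i]):
                    out[i + j] += a * b
        return out

    result = [Fraction(0)] * (n + 1)
    for (i, j), c in F.items():
        if i > n:
            continue  # X^i vanishes modulo X^(n+1)
        term = [Fraction(0)] * (n + 1)
        term[i] = Fraction(1)
        for _ in range(j):
            term = mul(term, s)  # multiply X^i by s(X), j times
        for k in range(n + 1):
            result[k] += c * term[k]
    return result


def formal_inverse(F, n):
    """Solve F(X, i(X)) = 0 for i(X) modulo X^(n+1), one degree at a time."""
    inv = [Fraction(0)] * (n + 1)
    for k in range(1, n + 1):
        # Because F(X, Y) = X + Y + O(2), raising the X^k-coefficient of i(X)
        # by delta raises the X^k-coefficient of F(X, i(X)) by exactly delta
        # and leaves lower coefficients alone, so we cancel the current error.
        inv[k] -= compose_truncated(F, inv, n)[k]
    return inv


# Example: F(X, Y) = X + Y + 2XY.
F = {(1, 0): 1, (0, 1): 1, (1, 1): 2}
print(formal_inverse(F, 4))
```

For $F(X,Y) = X + Y + 2XY$ this returns the coefficients $0, -1, 2, -4, 8$, the start of $-X/(1+2X)$. The degree-by-degree update only relies on the linear part $X + Y$ from condition (1), which is exactly why no further axioms are needed for inverses.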

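Although we won’t prove this result, we note that all (one-dimensional) formal group laws over a $\mathbb{Q}$-algebra, and more generally over any ring with no nonzero element that is both torsion and nilpotent, are commutative. (This result is sometimes known as Lazard’s Theorem.)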

By one measure, the simplest of all formal group laws are those lying inside $R[X,Y]$, i.e. those given by polynomials (versus formal power series). Here, two common examples come to mind:

Example 1: The simplest of all formal group laws is the additive formal group law, given by $F(X,Y) = X + Y$. Less obvious is the multiplicative formal group law, $F(X,Y) = X + Y + XY$, which gives, for example, the group law for multiplication on $R^\times$ under shifted coordinates, since $(1+X)(1+Y) = 1 + (X + Y + XY)$.

These two examples have something in common: each is a member of the one-dimensional family $F_a(X,Y) = X + Y + aXY$ ($a \in R$) of formal group laws. And, as our first Theorem shows, these often exhaust the polynomial formal group laws over $R$.
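As a quick sanity check that every member of the family $X + Y + aXY$ satisfies the associativity condition (2), one can compare both bracketings at integer points. The sketch below (plain Python; the function names are illustrative) also checks the identity $1 + a(X + Y + aXY) = (1 + aX)(1 + aY)$, which exhibits this law as ordinary multiplication in the shifted, scaled coordinate $1 + aX$.

```python
from itertools import product


def F_a(a, x, y):
    """The polynomial law X + Y + aXY, evaluated at integer points."""
    return x + y + a * x * y


def check_family(a, values=range(-3, 4)):
    """Spot-check associativity of X + Y + aXY on a grid of integer points."""
    for x, y, z in product(values, repeat=3):
        left = F_a(a, F_a(a, x, y), z)
        right = F_a(a, x, F_a(a, y, z))
        # Both bracketings agree with one expression, symmetric in x, y, z.
        symmetric = x + y + z + a * (x * y + y * z + z * x) + a * a * x * y * z
        assert left == right == symmetric
        # Shifted coordinates turn the law into ordinary multiplication.
        assert 1 + a * F_a(a, x, y) == (1 + a * x) * (1 + a * y)
    return True


for a in (0, 1, -5):
    print(a, check_family(a))
```

Since both bracketings have degree at most 1 in each variable, agreement on this grid in fact forces the polynomial identity for each tested value of $a$.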

Theorem: Suppose that $R$ is reduced (i.e. has trivial nilradical), and let $F \in R[X,Y]$ be a polynomial formal group law over $R$. Then $F(X,Y) = X + Y + aXY$ for some $a \in R$.

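Proof: Suppose that $F$ is given by the polynomial

$F(X,Y) = \sum_{i=0}^{n} a_i(Y)\,X^i$, with $a_i(Y) \in R[Y]$,

and let $n = \deg_X F$, the degree of $F$ in $X$ (so that $a_n(Y) \neq 0$). Then $\deg_X F(F(X,Y),Z)$ is at most $n^2$, with the coefficient of $X^{n^2}$ equal to $a_n(Y)^n\,a_n(Z)$.

Now, suppose that this coefficient is $0$. Taking $Z = Y$, it follows that $a_n(Y)^{n+1} = 0$, so that $a_n(Y)$ is nilpotent. By the Exercises, it follows that each coefficient $a_{n,j}$ of $a_n(Y) = \sum_j a_{n,j}Y^j$ is nilpotent in $R$, for all $j$. The nilradical of $R$ is trivial by hypothesis, so that $a_{n,j} = 0$ for all $j$. This contradicts that $a_n(Y) \neq 0$, so that $\deg_X F(F(X,Y),Z)$ is exactly $n^2$. On the other hand, a quick calculation shows that $\deg_X F(X, F(Y,Z))$ is at most $n$. Condition (2) forces $n^2 \leq n$, and condition (1) gives $n = 1$ exactly.

Likewise, the same argument applied to degrees in $Z$ (with the roles of the two bracketings exchanged) yields $\deg_Y F = 1$. Thus $F$ fits the form

$F(X,Y) = c_0 + c_1X + c_2Y + aXY$,

whereupon condition (1) gives our result.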

Thus, we see that polynomial formal group laws are unavoidably plain in the case of reduced rings. (The Exercises contain further examples of polynomial formal group laws, over non-reduced rings.)
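To see concretely where reducedness enters the proof, one can expand both sides of condition (2) for a candidate polynomial and compare their degrees in $X$. The sketch below is a minimal illustration with integer coefficients, optionally reduced modulo $m$ (i.e. working over $\mathbb{Z}/m\mathbb{Z}$); the dictionary representation and the function names are choices made here, not anything from the original argument.

```python
from collections import defaultdict

# A polynomial in X, Y, Z is a dict mapping exponents (i, j, k) to integers.
X, Y, Z = {(1, 0, 0): 1}, {(0, 1, 0): 1}, {(0, 0, 1): 1}


def _clean(p, m):
    """Drop zero coefficients, reducing modulo m when m is given."""
    out = {}
    for mono, c in p.items():
        if m:
            c %= m
        if c:
            out[mono] = c
    return out


def _mul(p, q, m):
    out = defaultdict(int)
    for (i1, j1, k1), u in p.items():
        for (i2, j2, k2), v in q.items():
            out[(i1 + i2, j1 + j2, k1 + k2)] += u * v
    return _clean(out, m)


def substitute(F, U, V, m=None):
    """Return F(U, V), where F = {(i, j): c} is a polynomial in two variables."""
    total = defaultdict(int)
    for (i, j), c in F.items():
        term = {(0, 0, 0): 1}
        for _ in range(i):
            term = _mul(term, U, m)
        for _ in range(j):
            term = _mul(term, V, m)
        for mono, coef in term.items():
            total[mono] += c * coef
    return _clean(total, m)


def deg_x(p):
    return max((i for (i, _, _) in p), default=-1)


def associativity_report(F, m=None):
    """Return (F(F(X,Y),Z) == F(X,F(Y,Z)), deg_X of the left, deg_X of the right)."""
    left = substitute(F, substitute(F, X, Y, m), Z, m)
    right = substitute(F, X, substitute(F, Y, Z, m), m)
    return left == right, deg_x(left), deg_x(right)


print(associativity_report({(1, 0): 1, (0, 1): 1, (1, 1): 3}))  # X + Y + 3XY
print(associativity_report({(1, 0): 1, (0, 1): 1, (2, 1): 1}))  # X + Y + X^2 Y
print(associativity_report({(1, 0): 1, (0, 1): 1, (2, 1): 2, (1, 2): 2}, m=4))
```

The three calls report `(True, 1, 1)`, `(False, 4, 2)` and `(True, 2, 2)`. For $X + Y + X^2Y$ over $\mathbb{Z}$ the degrees are $n^2 = 4$ versus $n = 2$, exactly as in the proof. Over the non-reduced ring $\mathbb{Z}/4\mathbb{Z}$, however, $X + Y + 2X^2Y + 2XY^2$ passes: its leading coefficient $a_2(Y)^2\,a_2(Z) = 8Y^2Z$ vanishes, which is precisely the step where the proof invokes the trivial nilradical.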

— PART II (RATIONAL GROUP LAWS) —

Ever on the hunt for simple examples, we now turn our attention to formal group laws defined by rational functions. I mentioned previously that few students see more than three formal group laws expressed in closed form. This third example is often the following:

Example 2: Let $R$ be a commutative ring, let $b \in R$, and define

$F_b(X,Y) = \frac{X + Y}{1 + bXY}$,

considered as formal power series in $R[[X,Y]]$. (If $R$ is a field, we may view $F_b$ as an element of $R(X,Y)$ without incident. In general, though, we are studying the localization of $R[X,Y]$ with respect to the multiplicatively closed set $\{(1 + bXY)^k : k \geq 0\}$.)

It can be shown that $F_b$ defines a formal group law over $R$. Readers may recognize two special cases of $F_b$, in that

- $\tanh(x + y) = F_1(\tanh x, \tanh y)$;

- $\tan(x + y) = F_{-1}(\tan x, \tan y)$.

(These may be obtained from each other via Osborne’s Rule.) As a remark, $F_1$ also gives the law for adding velocities in the framework of special relativity: in units where $c = 1$ (the speed of light), velocities $u$ and $v$ combine to $F_1(u, v) = \frac{u + v}{1 + uv}$.
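The special cases above can be spot-checked numerically. The sketch below assumes the convention $F_b(X,Y) = \frac{X+Y}{1+bXY}$, compares $b = \pm 1$ against the standard library's `math.tanh` and `math.tan`, and checks associativity exactly at a few rational points (chosen so that no denominator vanishes); the function names are illustrative.

```python
import math
from fractions import Fraction


def F_b(b, x, y):
    """The rational law (X + Y) / (1 + bXY), evaluated at a point."""
    return (x + y) / (1 + b * x * y)


def check_example_2():
    # b = 1 recovers the tanh addition formula, b = -1 the tan addition formula.
    for x, y in [(0.3, -0.7), (0.1, 0.25), (0.4, 0.2)]:
        assert math.isclose(math.tanh(x + y), F_b(1, math.tanh(x), math.tanh(y)))
        assert math.isclose(math.tan(x + y), F_b(-1, math.tan(x), math.tan(y)))
    # Exact associativity at rational points where no denominator vanishes.
    x, y, z = Fraction(1, 3), Fraction(-2, 5), Fraction(1, 7)
    for b in (Fraction(1), Fraction(-1), Fraction(3, 2)):
        assert F_b(b, F_b(b, x, y), z) == F_b(b, x, F_b(b, y, z))
    return True


print(check_example_2())
# Relativistic velocity addition (c = 1): two speeds of c/2 combine to 4c/5.
print(F_b(1, Fraction(1, 2), Fraction(1, 2)))
```

Using `Fraction` for the associativity check avoids floating-point noise, so the equality test is exact rather than approximate.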

Actually, the one-dimensional family $F_b$ of formal group laws (as well as the family $F_a$ given after Example 1) belongs to a two-parameter family

$F_{a,b}(X,Y) = \frac{X + Y + aXY}{1 + bXY}$,

defined over any commutative ring $R$. As our next Theorem shows, these often exhaust the rational formal group laws over $R$:

Theorem: Let $R$ be a reduced (commutative) ring. If $F \in R(X,Y)$ is a rational formal group law over $R$, then

$F(X,Y) = \frac{X + Y + aXY}{1 + bXY}$

for some constants $a, b \in R$.
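That every member of this family really is a formal group law can be seen by clearing denominators. This short computation is added here for illustration; it is not part of Bismuth’s or Coleman and McGuinness’s argument. Writing $F_{a,b}(X,Y) = \frac{X+Y+aXY}{1+bXY}$ and multiplying the numerator and denominator of $F_{a,b}(F_{a,b}(X,Y),Z)$ by $1 + bXY$ gives

$$F_{a,b}(F_{a,b}(X,Y),Z) = \frac{X + Y + Z + a(XY + YZ + ZX) + (a^2 + b)XYZ}{1 + b(XY + YZ + ZX) + abXYZ}.$$

The right-hand side is symmetric in $X, Y, Z$, and $F_{a,b}$ is symmetric in its two arguments, so $F_{a,b}(X, F_{a,b}(Y,Z)) = F_{a,b}(F_{a,b}(Y,Z), X)$ equals the same expression. This is associativity. The first-order condition holds because $F_{a,b}(X,Y) = (X + Y + aXY)(1 - bXY + \cdots) = X + Y + O(X^2, XY, Y^2)$.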

Note: This Theorem dates from 1976, in Rational Formal Group Laws, the doctoral dissertation of Robert Bismuth [1]. Unfortunately, his proof clocks in at around 30 pages, and – in my opinion – fails to address the material in a conceptual way.

Here, we present instead (a significantly expanded version of) a later proof, due to R. Coleman and F. McGuinness [3]. This proof, published under the by-now-familiar title Rational Formal Group Laws, holds when $R$ is a field of characteristic $0$. (The full proof may thereafter be obtained using techniques from [1].)

Proof: For the moment, let us assume that $R$ (a field of characteristic $0$) is algebraically closed. Let $g(X) = \frac{\partial F}{\partial Y}(X, 0)$ (a rational function), and define $\tau(X) = F(X, X)$.

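With this, we define the $1$-form $\omega = \frac{dX}{g(X)}$, which, as a claim, satisfies $\tau^*\omega = 2\omega$. To see this, we recall the definition of the pullback:

$$\tau^*\omega = \frac{d(\tau(X))}{g(\tau(X))} = \frac{\tau'(X)\,dX}{g(\tau(X))}.$$

To simplify this last expression, recall that $F(F(X,Y),Z) = F(X,F(Y,Z))$. Applying $\frac{\partial}{\partial Z}$ at $Z = 0$ (and using $F(Y,0) = Y$), it follows by the chain rule that $g(F(X,Y)) = \frac{\partial F}{\partial Y}(X,Y)\,g(Y)$; setting $Y = X$ gives $g(\tau(X)) = \frac{\partial F}{\partial Y}(X,X)\,g(X)$. Then, as $F(X,Y) = F(Y,X)$ (by Lazard’s Theorem, since $R$ has characteristic $0$), we obtain $\frac{\partial F}{\partial X}(X,X) = \frac{\partial F}{\partial Y}(X,X) = \frac{g(\tau(X))}{g(X)}$. The chain rule gives $\tau'(X) = \frac{\partial F}{\partial X}(X,X) + \frac{\partial F}{\partial Y}(X,X) = \frac{2\,g(\tau(X))}{g(X)}$, so that

$$\tau^*\omega = \frac{2\,dX}{g(X)} = 2\omega,$$

as claimed. Next, let $\mathcal{P}$ (resp. $\mathcal{Z}$) denote the set of poles (resp. zeros) of $\omega$ on $\mathbb{P}^1$, and let $e_Q$ denote the ramification index of $\tau$ at $Q$. Recall that, in characteristic $0$, $\mathrm{ord}_Q(\tau^*\omega) = e_Q(\mathrm{ord}_{\tau(Q)}\omega + 1) - 1$. With the equation $\tau^*\omega = 2\omega$ (so that $\mathrm{ord}_Q(\tau^*\omega) = \mathrm{ord}_Q\,\omega$), it follows that $\tau^{-1}(\mathcal{P}) \subseteq \mathcal{P}$ and $\tau^{-1}(\mathcal{Z}) \subseteq \mathcal{Z}$. As $\tau$ is surjective and $\mathcal{P}$, $\mathcal{Z}$ are finite, both inclusions are equalities, and $\tau$ permutes $\mathcal{P}$ and $\mathcal{Z}$. Moreover, viewing $\tau$ as a branched cover $\mathbb{P}^1 \to \mathbb{P}^1$ of degree $d \geq 1$, we have, for each $Q \in \mathcal{P}$,

$$\sum_{Q' \in \tau^{-1}(Q)} (\mathrm{ord}_{Q'}\omega + 1) = \sum_{Q' \in \tau^{-1}(Q)} e_{Q'}\,(\mathrm{ord}_Q\omega + 1) = d\,(\mathrm{ord}_Q\omega + 1).$$

The right-hand side is bounded above by $\mathrm{ord}_Q\omega + 1$ (as $\mathrm{ord}_Q\omega + 1 \leq 0$); summing over $Q \in \mathcal{P}$ gives

$$\sum_{Q \in \mathcal{P}}\ \sum_{Q' \in \tau^{-1}(Q)} (\mathrm{ord}_{Q'}\omega + 1) \leq \sum_{Q \in \mathcal{P}} (\mathrm{ord}_Q\omega + 1).$$

The double sum at left is simply $\sum_{Q' \in \mathcal{P}} (\mathrm{ord}_{Q'}\omega + 1)$, since $\tau^{-1}(\mathcal{P}) = \mathcal{P}$. It follows that the inequality in the previous line is equality, i.e. $d\,(\mathrm{ord}_Q\omega + 1) = \mathrm{ord}_Q\omega + 1$ for all $Q \in \mathcal{P}$. Since $d - 1 = \sum_{Q' \in \tau^{-1}(Q)} (e_{Q'} - 1) + (|\tau^{-1}(Q)| - 1)$, this equality can be written suggestively as

$$(\mathrm{ord}_Q\omega + 1)\sum_{Q' \in \tau^{-1}(Q)} (e_{Q'} - 1) = -(\mathrm{ord}_Q\omega + 1)\,(|\tau^{-1}(Q)| - 1), \qquad (1)$$

in which we note that our left-hand side is non-positive and our right-hand side is non-negative (as $\tau$ surjects, so that $|\tau^{-1}(Q)| \geq 1$). Thus each is $0$, and it follows that either $d = 1$ or $\mathrm{ord}_Q\omega = -1$ for all $Q \in \mathcal{P}$ (i.e. $\omega$ admits only simple poles).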
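The invariance claim $\tau^*\omega = 2\omega$, i.e. $\tau'(X)/g(\tau(X)) = 2/g(X)$ with $g(X) = \frac{\partial F}{\partial Y}(X,0)$ and $\tau(X) = F(X,X)$, can be spot-checked with exact arithmetic. The sketch below uses dual numbers ($\varepsilon^2 = 0$) to differentiate rational functions exactly. The test laws $\frac{X+Y+aXY}{1+bXY}$, for which $g(X) = 1 + aX - bX^2$, and the helper names `Dual`, `make_law`, `g` and `invariance_holds` are choices made here for illustration.

```python
from fractions import Fraction


class Dual:
    """Numbers u + v*eps with eps^2 = 0, used to differentiate rational functions exactly."""

    def __init__(self, u, v=0):
        self.u, self.v = Fraction(u), Fraction(v)

    @staticmethod
    def _lift(other):
        return other if isinstance(other, Dual) else Dual(other)

    def __add__(self, other):
        other = self._lift(other)
        return Dual(self.u + other.u, self.v + other.v)

    __radd__ = __add__

    def __mul__(self, other):
        other = self._lift(other)
        return Dual(self.u * other.u, self.u * other.v + self.v * other.u)

    __rmul__ = __mul__

    def __truediv__(self, other):
        other = self._lift(other)
        # Quotient rule: (u / U)' = (u' U - u U') / U^2.
        return Dual(self.u / other.u, (self.v * other.u - self.u * other.v) / other.u ** 2)


def make_law(a, b):
    """Test case: the law (X + Y + aXY) / (1 + bXY)."""
    return lambda x, y: (x + y + a * x * y) / (1 + b * x * y)


def g(law, x):
    """g(x) = dF/dY at (x, 0): evaluate F(x, 0 + eps) and read off the eps part."""
    return law(Dual(x), Dual(0, 1)).v


def invariance_holds(law, x):
    """Check tau'(x) / g(tau(x)) == 2 / g(x), i.e. (tau^* omega)(x) == 2 omega(x)."""
    t = law(Dual(x, 1), Dual(x, 1))  # t.u = tau(x) = F(x, x), t.v = tau'(x)
    return t.v / g(law, t.u) == 2 / g(law, x)


print(invariance_holds(make_law(2, 3), Fraction(1, 5)))
print(invariance_holds(lambda x, y: x + y + x * y * y, Fraction(1, 2)))
```

The first call prints `True`. The second uses $X + Y + XY^2$, which is not a formal group law, and prints `False`. This is a reminder that the claim genuinely uses associativity and commutativity, not just the first-order condition.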

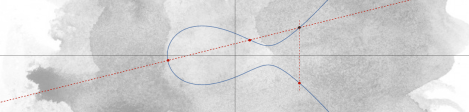

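In this first case, $\tau$ is a linear fractional transformation fixing $0$ (of infinite order, because $\tau(0) = 0$ and $\tau'(0) = 2$, so that $(\tau^m)'(0) = 2^m \neq 1$ for all $m \geq 1$), so each power of $\tau$ has a unique fixed point other than $0$. As $\mathcal{P}$ is finite and $\tau$ permutes $\mathcal{P}$, there exists an integer $k \geq 1$ such that $\tau^k(Q) = Q$ for every $Q \in \mathcal{P}$, hence each such $Q$ is fixed by $\tau^k$. On the other hand, any fixed point of $\tau$ is fixed by $\tau^k$, and $0 \notin \mathcal{P}$ (as $g(0) = 1$ by condition (1)), so that $\tau$ fixes $Q$ by uniqueness. It follows that the fixed point $Q_\tau \neq 0$ of $\tau$ is the unique pole of $\omega$ (note that $\mathcal{P} \neq \emptyset$, as the divisor of a meromorphic $1$-form on $\mathbb{P}^1$ has degree $-2$). Likewise, we may show that $\mathcal{Z} \subseteq \{Q_\tau\}$, since $0 \notin \mathcal{Z}$; as $Q_\tau$ is a pole, $\mathcal{Z} = \emptyset$. That is, $\omega$ has one pole (of order exactly $2$, by the same degree count) and no zeros, so that $\omega = c\,d\sigma$ for some $c \in R^\times$, in which $\sigma$ is some linear fractional transformation of $\mathbb{P}^1$ fixing $0$ (and sending $Q_\tau$ to $\infty$).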

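In the second case, fix $Q \in \mathcal{P}$. Then $\tau^{-1}(Q)$ consists of a single point (as $\tau^{-1}(\mathcal{P}) = \mathcal{P}$ and $\tau$ permutes $\mathcal{P}$), say $Q'$, so that $e_{Q'} = d$. A local calculation (using $\tau^*\omega = 2\omega$ and that $Q$, $Q'$ are simple poles) gives

$$2\,\mathrm{res}_{Q'}\,\omega = d\,\mathrm{res}_Q\,\omega. \qquad (2)$$

As in the previous case, there exists $k \geq 1$ such that $\tau^k(Q) = Q$. For this $k$, induction on (2) along the chain of unique preimages of $Q$ (which returns to $Q$ after $k$ steps) gives $2^k\,\mathrm{res}_Q\,\omega = d^k\,\mathrm{res}_Q\,\omega$, hence $d = 2$ (because $\mathrm{res}_Q\,\omega \neq 0$ and $R$ has characteristic $0$). If $Q$ is not a branch point of $\tau$, then $|\tau^{-1}(Q)| = d = 2$, contradicting the fact that $\tau^{-1}(Q)$ is a single point. Thus $\mathcal{P}$ (and similarly, $\mathcal{Z}$) is contained in the branch locus of $\tau$, each of its points having a unique preimage of ramification index $2$, so that in particular $|\mathcal{P}| + |\mathcal{Z}| \leq 2d - 2 = 2$ by the Riemann–Hurwitz formula. As $\mathcal{P}$ is non-empty (as the divisor of $\omega$ has degree $-2$), $|\mathcal{P}| \geq 2$ (by the Residue Theorem, since a single simple pole would carry a non-zero total residue), so that $\omega$ has two (distinct) poles and no zeros. It follows that $\omega = c\,\frac{d\sigma}{1 + \sigma}$ for some $c \in R^\times$, in which $\sigma$ is a linear fractional transformation fixing $0$ and sending the two poles of $\omega$ to $-1$ and $\infty$ (as $R$ is algebraically closed).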
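As an illustration of this second case (a worked example added here, not taken from [3]), take the tangent law $F(X,Y) = \frac{X+Y}{1-XY}$. Then

$$g(X) = 1 + X^2, \qquad \omega = \frac{dX}{1 + X^2}, \qquad \tau(X) = \frac{2X}{1 - X^2},$$

so $\omega$ has simple poles at $X = \pm i$ (with residues $\mp\frac{i}{2}$) and no zeros, and $\tau$ has degree $d = 2$. Moreover,

$$\tau(X) - i = \frac{i\,(X - i)^2}{1 - X^2}, \qquad \tau(X) + i = \frac{-i\,(X + i)^2}{1 - X^2},$$

so each pole is fixed by $\tau$ and is its own unique preimage, with ramification index $2 = d$. The residue relation $2\,\mathrm{res}_{Q'}\,\omega = d\,\mathrm{res}_Q\,\omega$ then holds with $Q' = Q$, and the cycle length is $k = 1$.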

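In either case, we may write $F(X,Y) = \sigma^{-1}(G(\sigma(X), \sigma(Y)))$: in the first case with $G(X,Y) = X + Y$; in the second, with $G(X,Y) = X + Y + XY$. Indeed, let $\ell(X)$ be the formal antiderivative of $1/g(X)$ with $\ell(0) = 0$, so that $\omega = d\ell$. Differentiating in $Y$ and using $g(F(X,Y)) = \frac{\partial F}{\partial Y}(X,Y)\,g(Y)$, we find $\ell(F(X,Y)) = \ell(X) + \ell(Y)$; and $\ell = c\,\sigma$ in the first case, while $\ell = c\log(1 + \sigma)$ in the second. (I.e. $F$ is isomorphic (see the Exercises) to either the additive or multiplicative formal group law on $R$.) Regardless, simplification yields

$$F(X,Y) = \frac{X + Y + aXY}{1 + bXY}, \qquad (3)$$

with $a, b \in R$. This concludes our proof when $R$ is algebraically closed. In the general case, fix an embedding of $R$ into an algebraic closure $\overline{R}$, and consider which rational functions of the form (3) lie in $R(X,Y)$.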
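For instance, here is a worked instance of that simplification. It assumes the normalization $\sigma(X) = \frac{\alpha X}{1 + \beta X}$ ($\alpha \neq 0$), which covers every linear fractional transformation fixing $0$:

$$\sigma^{-1}\bigl(\sigma(X) + \sigma(Y)\bigr) = \frac{X + Y + 2\beta XY}{1 - \beta^2 XY},$$

$$\sigma^{-1}\bigl(\sigma(X) + \sigma(Y) + \sigma(X)\sigma(Y)\bigr) = \frac{X + Y + (\alpha + 2\beta)XY}{1 - \beta(\alpha + \beta)XY}.$$

Both have the shape $\frac{X + Y + aXY}{1 + bXY}$. The scalar $\alpha$ drops out of the first, since rescaling $\sigma$ does not change $\sigma^{-1}(\sigma(X) + \sigma(Y))$. Here $\sigma^{-1}(u) = \frac{u}{\alpha - \beta u}$.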

— PART III (ALGEBRAIC GROUP LAWS) —

At the rate we’re going, a better name for this post might be Formal Groups (And Where Not to Find Them). And so, to find the examples we seek, we shift our attention one final time: from rational formal group laws to algebraic formal group laws, i.e. group laws $F$ such that there exists a nonzero polynomial $P(X, Y, Z) \in R[X, Y, Z]$ satisfying $P(X, Y, F(X, Y)) = 0$.

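Example 3: Using the addition formula for sine, we see that the function

$F(X,Y) = X\sqrt{1 - Y^2} + Y\sqrt{1 - X^2}$,

defines a formal group law over the ring $\mathbb{Z}[\frac{1}{2}]$ (indeed, $F(\sin x, \sin y) = \sin(x + y)$). Moreover, $Z = F(X,Y)$ satisfies the following polynomial relation:

$X^4 + Y^4 + Z^4 - 2X^2Y^2 - 2Y^2Z^2 - 2Z^2X^2 + 4X^2Y^2Z^2 = 0$.

Thus $F$ is an algebraic formal group law over $\mathbb{Z}[\frac{1}{2}]$.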
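A polynomial relation of this kind can be derived by isolating one square root and squaring twice (a routine derivation, spelled out here). With $Z = F(X,Y) = X\sqrt{1-Y^2} + Y\sqrt{1-X^2}$,

$$Z - X\sqrt{1-Y^2} = Y\sqrt{1-X^2} \;\Longrightarrow\; Z^2 + X^2 - Y^2 = 2XZ\sqrt{1-Y^2} \;\Longrightarrow\; (Z^2 + X^2 - Y^2)^2 = 4X^2Z^2(1 - Y^2).$$

Expanding the last equation gives $X^4 + Y^4 + Z^4 - 2X^2Y^2 - 2Y^2Z^2 - 2Z^2X^2 + 4X^2Y^2Z^2 = 0$, which is symmetric in $X$, $Y$, $Z$.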

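Example 4: Fix a ring $R$, and consider the set

$S^1(R) = \{(x, y) \in R^2 : x^2 + y^2 = 1\}$.

For $R = \mathbb{R}$, multiplication (in the complex sense, identifying $(x, y)$ with $x + iy$) gives a group operation on $S^1(\mathbb{R})$, which makes $S^1(\mathbb{R})$ into a Lie group with identity $(1, 0)$. Near the identity, we obtain a chart for $S^1(\mathbb{R})$ of the form $(x, y) \mapsto y$, with inverse $y \mapsto (\sqrt{1 - y^2}, y)$. Under these coordinates, the group law ($(x_1, y_1) \cdot (x_2, y_2) = (x_1x_2 - y_1y_2,\ x_1y_2 + x_2y_1)$) takes the form

$y_3 = y_1\sqrt{1 - y_2^2} + y_2\sqrt{1 - y_1^2}$,

i.e. $y_3 = F(y_1, y_2)$, where $F$ is as in Example 3. That is, we have seen that $F$ arises as the group law of an algebraic group (with respect to local coordinates). Consistent with the terminology of Bochner, a power series of this form is said to be a formal algebraic group (cf. formal Lie group).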
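Rational points on the circle give an exact check of Examples 3 and 4 together. The $y$-coordinate of the product of two points $(x_i, y_i)$ with $x_i = \sqrt{1 - y_i^2} > 0$ is $F(y_1, y_2)$, so it must satisfy the polynomial relation of Example 3. The sketch below assumes that relation in the symmetric form $X^4 + Y^4 + Z^4 - 2X^2Y^2 - 2Y^2Z^2 - 2Z^2X^2 + 4X^2Y^2Z^2 = 0$ and uses the standard rational parametrization $t \mapsto \left(\frac{1-t^2}{1+t^2}, \frac{2t}{1+t^2}\right)$; the function names are illustrative.

```python
from fractions import Fraction


def circle_point(t):
    """Rational point on x^2 + y^2 = 1; the x-coordinate is positive when |t| < 1."""
    t = Fraction(t)
    return (1 - t * t) / (1 + t * t), 2 * t / (1 + t * t)


def multiply(p, q):
    """Group law on the circle: (x1 + i*y1) * (x2 + i*y2)."""
    (x1, y1), (x2, y2) = p, q
    return x1 * x2 - y1 * y2, x1 * y2 + x2 * y1


def relation(X, Y, Z):
    """Left-hand side of the polynomial relation satisfied by Z = F(X, Y)."""
    return (X**4 + Y**4 + Z**4 - 2 * X**2 * Y**2 - 2 * Y**2 * Z**2
            - 2 * Z**2 * X**2 + 4 * X**2 * Y**2 * Z**2)


def check(t1, t2):
    p, q = circle_point(t1), circle_point(t2)
    x3, y3 = multiply(p, q)
    # In the chart x = sqrt(1 - y^2) > 0, so y3 = y1*x2 + y2*x1 = F(y1, y2).
    assert p[0] > 0 and q[0] > 0
    assert x3 ** 2 + y3 ** 2 == 1  # the product stays on the circle
    return relation(p[1], q[1], y3) == 0


print(check(Fraction(1, 5), Fraction(1, 7)), check(Fraction(-2, 7), Fraction(1, 4)))
```

Because everything is computed with `Fraction`, the relation is verified exactly at these points rather than up to rounding error.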

These two Examples paint a picture which is indicative of a general rule, established by R. Coleman in 1986 [2]:

Theorem: Let $K$ be an algebraically closed field of characteristic zero. If $F$ is an algebraic formal group law over $K$, then $F$ is algebraically isomorphic to a formal algebraic group.

Proof: See [2].

That is, not only do algebraic groups give rise to algebraic formal groups, but all such formal groups appear (up to isomorphism) in this form. (The caveat “up to isomorphism” is necessary, as a formal power series need not converge.)

And so, we finally have an answer to our question: if you’re looking for “simple” (in an algebraic sense) examples of formal group laws, look to the theory of algebraic groups. Not only will you find some great examples (e.g. matrix groups and elliptic curves), you’d be hard-pressed to find anything quite as simple.

— EXERCISES —

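Exercise: A homomorphism $F \to G$ of formal groups over $R$ is a power series $\varphi(X) \in XR[[X]]$ such that $\varphi(F(X,Y)) = G(\varphi(X), \varphi(Y))$. Show that $\varphi(X) = cX$ is a homomorphism $F_a \to F_b$ of polynomial formal groups over $R$ (where $F_a(X,Y) = X + Y + aXY$) if $a = bc$. It follows that associate elements define isomorphic formal groups. Show that the converse need not hold. Hint: when is $\log(1 + X)$ defined over $R$?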

Exercise: If $\varphi : F_a \to F_b$ is a homomorphism of polynomial formal groups over $R$ and $\varphi$ is a polynomial, we’ll say that $\varphi$ is a p-homomorphism of polynomial formal groups. Similarly, $\varphi$ is a p-isomorphism if $\varphi$ admits a polynomial inverse. Show that the p-isomorphism classes of polynomial formal groups are classified by the equivalence classes of associate elements in $R$.

Exercise: Let $R$ be a commutative ring. Show that the nilradical of $R[X]$ is $\mathfrak{N}(R)[X]$, where $\mathfrak{N}(R)$ denotes the nilradical of $R$. Hint: the inclusion “$\supseteq$” is easy; for the converse, proceed by induction on degree. (Solution can be found on Project Crazy Project.)

Exercise: Fix a nonzero integer , and let

denote the ring

. Show that

defines a polynomial formal group law, which is not of the form $X + Y + aXY$. Find a polynomial formal group law over the ring

, not of the form $X + Y + aXY$.

Exercise: Given the result of the main Theorem of Part II, find necessary and sufficient conditions for each of the two cases therein to occur. Are rational formal group laws in general (rationally) isomorphic to the additive formal group law, or the multiplicative formal group law?

— REFERENCES —

[1] R. Bismuth, Rational Formal Group Laws, doctoral dissertation (1976).

[2] R. Coleman, One-Dimensional Algebraic Formal Groups, Pacific J. Math. 122 (1986), no. 1, 35-41.

[3] R. Coleman and F. McGuinness, Rational Formal Group Laws, Pacific J. Math. 147 (1991), no. 1, 25-27.
